Deployment architecture for Ultra Tasks

Low-latency Ultra Tasks require a FeedMaster node that sits between clients and Snaplex JCC nodes, receiving inbound HTTP requests and routing them to pipeline instances for processing. This topic describes the supported deployment architectures, from a minimal single-FeedMaster setup to multi-region disaster recovery. For Headless Ultra Tasks, which do not require a FeedMaster, see Headless Ultra Tasks.

Low-latency Ultra architecture

The minimum configuration for a Low-latency Ultra Task consists of one FeedMaster node deployed alongside one or more Snaplex JCC nodes. The FeedMaster:

- Receives inbound HTTP or HTTPS requests from clients

- Queues requests and routes each one to an available pipeline instance on a JCC node

- Returns the pipeline output as an HTTP response to the original caller
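The receive, queue, route, and respond flow can be pictured with a small in-memory model. This is a conceptual sketch only, not how the FeedMaster is implemented. The class name `ToyFeedMaster`, the instance names, and the round-robin routing policy are assumptions for illustration; the real FeedMaster routes each request to whichever pipeline instance is available.

```python
from collections import deque
from itertools import cycle
from typing import Callable, Dict, List, Tuple


class ToyFeedMaster:
    """Hypothetical model: receive -> queue -> route to an instance -> respond."""

    def __init__(self, instance_names: List[str]):
        self.queue: deque = deque()
        # Assumption: simple round-robin stands in for "next available instance".
        self._next_instance = cycle(instance_names)
        self.routed: List[Tuple[str, str]] = []

    def receive(self, request_id: str, body: str) -> None:
        # An inbound HTTP(S) request is queued before any pipeline sees it.
        self.queue.append((request_id, body))

    def dispatch(self, pipeline: Callable[[str, str], str]) -> Dict[str, str]:
        # Drain the queue, sending each request to a pipeline instance and
        # collecting the output that would go back as the HTTP response.
        responses: Dict[str, str] = {}
        while self.queue:
            request_id, body = self.queue.popleft()
            instance = next(self._next_instance)
            self.routed.append((request_id, instance))
            responses[request_id] = pipeline(instance, body)
        return responses
```

For example, with hypothetical instances `jcc-1` and `jcc-2`, three queued requests are routed to `jcc-1`, `jcc-2`, and `jcc-1` in turn, and each caller receives the output of the instance that handled its request.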

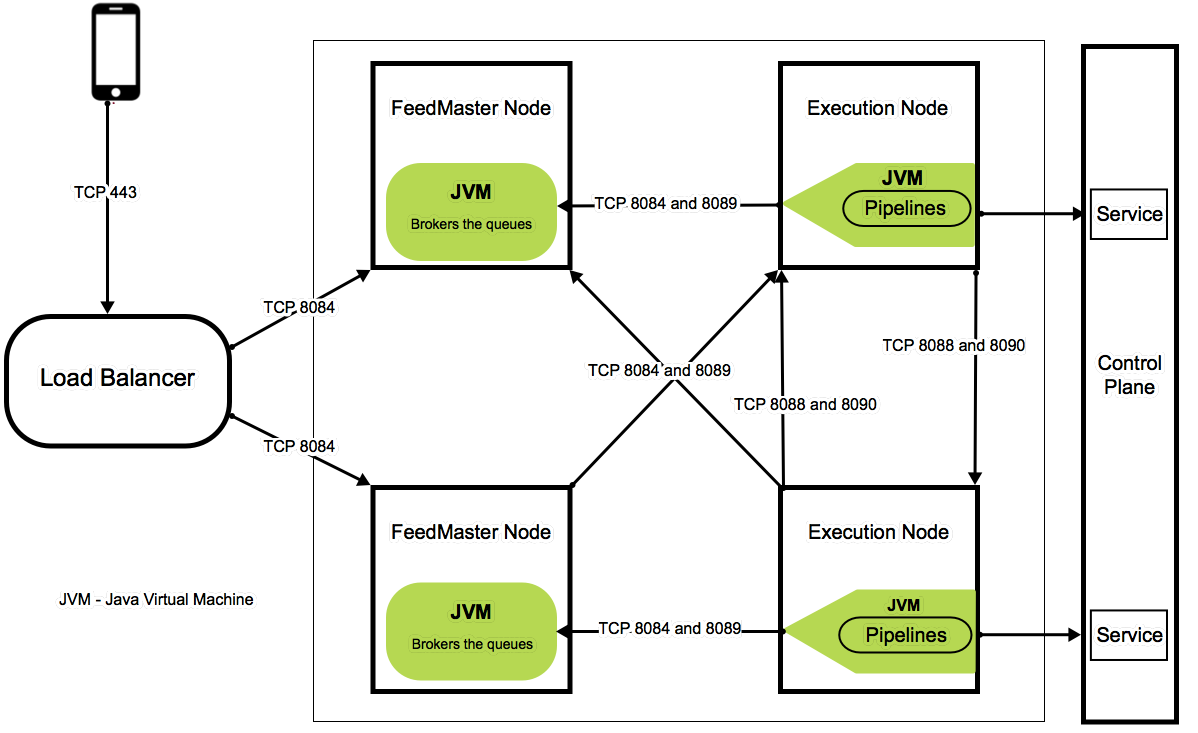

The FeedMaster uses the following ports, which must be open in the local firewall of the server hosting the FeedMaster:

- 8084: HTTPS port for inbound client requests

- 8089: Embedded ActiveMQ broker TLS (SSL) port for JCC node communication
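To confirm that the firewall allows these ports, a quick TCP connection test run from a client machine or a JCC node can help. The sketch below only checks that a TCP connection can be opened; it does not validate TLS or the broker protocol, and the function name `check_ports` is hypothetical.

```python
import socket
from typing import Dict, Iterable


def check_ports(host: str, ports: Iterable[int], timeout: float = 2.0) -> Dict[int, bool]:
    """Return {port: reachable} by attempting a plain TCP connection to each port."""
    results: Dict[int, bool] = {}
    for port in ports:
        try:
            # create_connection raises OSError on refusal, timeout, or DNS failure.
            with socket.create_connection((host, port), timeout=timeout):
                results[port] = True
        except OSError:
            results[port] = False
    return results
```

For example, `check_ports("feedmaster.example.com", [8084, 8089])`, where the host name is a placeholder, returns `True` for each port that accepted a connection.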

High availability configuration

The recommended production architecture places two FeedMaster nodes behind a load balancer. If one FeedMaster becomes unavailable, the load balancer routes requests to the other, eliminating the FeedMaster as a single point of failure.
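The failover behavior can be modeled as round-robin across FeedMaster nodes that skips any node marked unhealthy. This is a toy model, not the algorithm of any particular load balancer; the class name `FailoverRoundRobin` and the node names are hypothetical.

```python
from itertools import count
from typing import Dict, List


class FailoverRoundRobin:
    """Hypothetical load-balancer model: round-robin that skips unhealthy nodes."""

    def __init__(self, nodes: List[str]):
        self.nodes = list(nodes)
        self.healthy: Dict[str, bool] = {n: True for n in self.nodes}
        self._counter = count()

    def mark(self, node: str, is_healthy: bool) -> None:
        # In a real deployment this flag comes from the health check.
        self.healthy[node] = is_healthy

    def pick(self) -> str:
        # Start at the next round-robin slot and try each node at most once.
        start = next(self._counter)
        for offset in range(len(self.nodes)):
            node = self.nodes[(start + offset) % len(self.nodes)]
            if self.healthy[node]:
                return node
        raise RuntimeError("no healthy FeedMaster available")
```

With two nodes, requests alternate between them; once one node is marked unhealthy, every request goes to the other, and only when both are down does the caller see an error.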

Role of the Alias

The Ultra Task Alias field provides the stable invocation URL used with the load balancer. Configure an Alias on the Ultra Task, then point your load balancer at the Alias URL rather than a FeedMaster-specific URL. This ensures that callers use a consistent endpoint regardless of which FeedMaster node is handling requests.

Configure your load balancer to use the HealthZ URL https://<HOSTNAME>:8084/healthz to monitor the health of each FeedMaster. When a FeedMaster fails its health check, the load balancer stops routing to that node automatically. For FeedMaster setup details, see Deploy a FeedMaster Node.
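A health probe of the kind a load balancer performs against the HealthZ URL can be approximated as follows. This sketch assumes that an HTTP 200 response means healthy and that any other status, a timeout, or a connection error means unhealthy; your load balancer's own health-check settings define the exact rules. The function name `is_healthy` is hypothetical.

```python
import http.client
import urllib.request


def is_healthy(url: str, timeout: float = 3.0, context=None) -> bool:
    """Return True only if the health endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(url, timeout=timeout, context=context) as resp:
            return resp.status == 200
    except (OSError, http.client.HTTPException):
        # URLError/HTTPError (non-2xx), timeouts, and refused connections
        # are all OSError subclasses; treat them as a failed check.
        return False
```

If the FeedMaster certificate is signed by a private CA, pass an `ssl.SSLContext` as `context`, for example one created with `ssl.create_default_context(cafile=...)`.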

The following diagram illustrates the standard high availability architecture, including component communication and port assignments.

Disaster recovery configuration

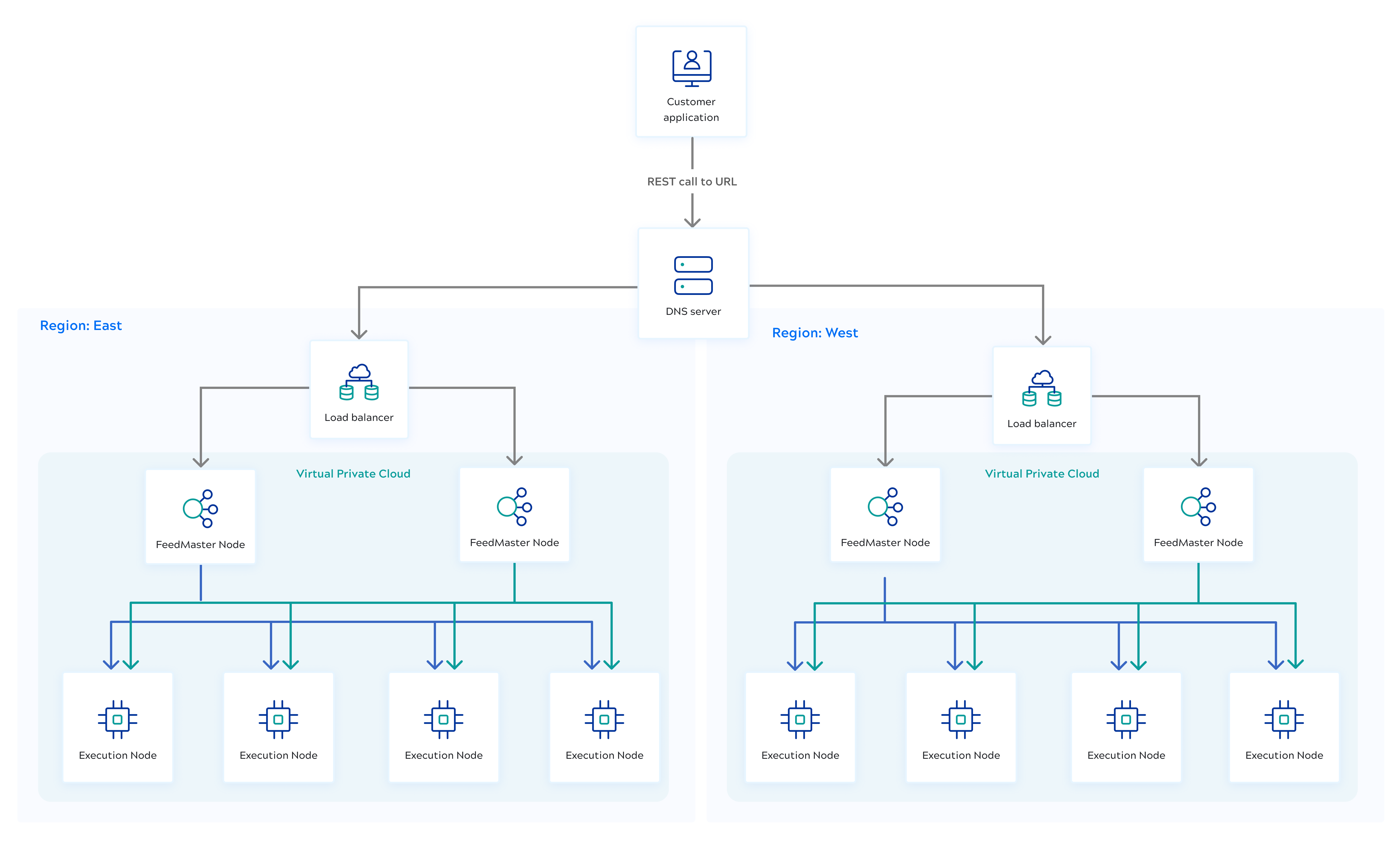

Ultra Tasks support a cross-region disaster recovery configuration using two Snaplexes in separate regions, each with two FeedMaster nodes and a load balancer. A shared DNS entry routes traffic to whichever region is operational.

Role of the Alias

Create matching Ultra Tasks in each region and assign both the same Alias value. Because both tasks share the same Alias, DNS failover can route callers to either region transparently: the invocation URL the caller uses does not change during a failover event.

Task deployment requirements for disaster recovery:

- Both Tasks must run the same pipeline and reside in the same project folder.

- Both Tasks must have the same Alias value.

- Each Task must be deployed to a different Snaplex instance.

- Tasks in each region must have unique names. Use a region-specific suffix to distinguish them, for example, task_name_OrgNameEast and task_name_OrgNameWest.
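The four requirements above can be checked mechanically before deployment. In this sketch the `TaskSpec` fields and the `dr_pair_problems` function are hypothetical; they describe a task pair in plain data rather than calling any SnapLogic API.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TaskSpec:
    # Hypothetical description of an Ultra Task; field names are illustrative.
    name: str
    pipeline: str
    project_folder: str
    alias: str
    snaplex: str


def dr_pair_problems(a: TaskSpec, b: TaskSpec) -> List[str]:
    """Return the DR rules a pair of Ultra Tasks violates (empty list if none)."""
    problems = []
    if a.pipeline != b.pipeline or a.project_folder != b.project_folder:
        problems.append("tasks must run the same pipeline from the same project folder")
    if a.alias != b.alias:
        problems.append("tasks must share the same Alias value")
    if a.snaplex == b.snaplex:
        problems.append("tasks must be deployed to different Snaplex instances")
    if a.name == b.name:
        problems.append("tasks must have unique names")
    return problems
```

A correctly configured East/West pair returns an empty list; a pair that reuses the same Snaplex and name, or uses different Alias values, reports each violation separately.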

DNS configuration

- Active-Active: Configure Route53 to distribute traffic across both regional load balancers simultaneously.

- Active-Standby: Configure Route53 failover routing with one region as primary and the other as standby.

This configuration requires implementation by your IT organization. Configure your load balancer or DNS to detect when a Snaplex goes offline and redirect traffic to the surviving region.
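The difference between the two DNS modes comes down to which regional load balancers DNS answers with, given the health of each region. The sketch below models only that decision; it does not use the Route53 API, and the function and region names are hypothetical.

```python
from typing import Dict, List, Optional


def route_targets(mode: str, regions: Dict[str, bool], primary: Optional[str] = None) -> List[str]:
    """Return the regions DNS would route to; regions maps name -> healthy."""
    healthy = [r for r, ok in regions.items() if ok]
    if mode == "active-active":
        # Spread traffic across every region that passes its health check.
        return healthy
    if mode == "active-standby":
        if primary is None or primary not in regions:
            raise ValueError("active-standby needs a known primary region")
        if regions[primary]:
            return [primary]
        # Primary is down: fail over to the first healthy standby, if any.
        return healthy[:1]
    raise ValueError(f"unknown mode: {mode}")
```

In active-active mode a failed region simply drops out of the answer; in active-standby mode traffic moves to the standby only when the primary fails its health check.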

Load balancer configuration

- Configure the load balancer to forward requests to FeedMaster port 8084.

- Use the HealthZ URL https://<HOSTNAME>:8084/healthz for health checks between the load balancers and the FeedMasters.

The following diagram illustrates the disaster recovery architecture.

FeedMaster storage limits

Because the FeedMaster node queues requests, it requires disk storage. Each request stays in the queue, occupying disk space, until the pipeline has processed it.

- The default space for queued messages is 10GB.

- The maximum storage you can configure depends on the available disk space.

- When the storage limit is reached, the FeedMaster rejects new requests with HTTP 420, Rate limit exceeded.

- If an upstream service used by the pipeline is slow, the queue grows. Setting a higher storage limit allows more requests to queue, but also delays notifying clients about upstream slowness.

- Storage is counted per FeedMaster node. In a multi-FeedMaster deployment, each request occupies space on one node.

- All Ultra Tasks on a Snaplex share the FeedMaster disk space. The jcc.broker.disk_limit property sets the maximum disk space for queued messages. The default is 10GB.

  - If your Snaplex uses the slpropz configuration method, set this value in Manager by specifying the limit in bytes in the Global properties field, for example: jcc.broker.disk_limit=20971520

  - If your Snaplex does not use slpropz, add the jcc.broker.disk_limit value to the global.properties configuration file.

- You can change the storage location for broker files using the properties jcc.broker_data_dir, jcc.broker_sched_dir, and jcc.broker_tmp_dir. The default location is $SL_ROOT/run/broker. Ensure the mount has sufficient disk space and that the snapuser account has full access to the data location.
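Because jcc.broker.disk_limit is specified in bytes, it helps to compute the value rather than type it by hand. For reference, the example value 20971520 is 20 × 1024 × 1024 bytes, or 20 MiB. The sketch below assumes that "GB" means GiB (2^30 bytes) and pairs the conversion with a toy queue that rejects requests once the byte limit would be exceeded. `gib_to_bytes` and `DiskBoundedQueue` are hypothetical names, and the queue is not the ActiveMQ broker.

```python
from collections import deque

GIB = 1024 ** 3  # assumption: "GB" in the limit is read as GiB (2**30 bytes)


def gib_to_bytes(gib: float) -> int:
    """Byte count to use for jcc.broker.disk_limit for a target size in GiB."""
    return int(gib * GIB)


class DiskBoundedQueue:
    """Toy model of a FeedMaster queue bounded by a byte limit (not the real broker)."""

    def __init__(self, limit_bytes: int = gib_to_bytes(10)):
        self.limit_bytes = limit_bytes
        self.used_bytes = 0
        self._items: deque = deque()

    def offer(self, payload: bytes) -> bool:
        """Queue the payload, or return False when the caller would get HTTP 420."""
        if self.used_bytes + len(payload) > self.limit_bytes:
            return False
        self._items.append(payload)
        self.used_bytes += len(payload)
        return True

    def complete_one(self) -> None:
        """The pipeline finished the oldest request, so its space is released."""
        payload = self._items.popleft()
        self.used_bytes -= len(payload)
```

For a 20 GiB limit, `gib_to_bytes(20)` gives 21474836480. In the toy queue, space is freed only when the pipeline completes a request, which is why a slow upstream service fills the limit and triggers rejections.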

When running multiple Tasks on one Snaplex/FeedMaster:

- If one Task is slow, the FeedMaster queues its requests and those callers receive errors. Other Tasks on the Snaplex are unaffected.

- If more than half the Tasks are slow, those Tasks receive errors but the others remain unaffected.

To ensure complete isolation between workloads, use separate Snaplex instances for different Ultra Tasks and configure sufficient storage for each to meet your SLAs.

Timeout between FeedMaster and clients

The timeout between the FeedMaster and the client is user-configurable. You can set

the timeout per request by passing an X-SL-RequestTimeout header

with the value in milliseconds (default: 900000). To set a global timeout for the

FeedMaster, use the jcc.llfeed.request_timeout global property.
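A client can set the per-request timeout by adding the X-SL-RequestTimeout header. In this sketch the Ultra Task URL is a placeholder, the JSON content type is an assumption about the pipeline's input, and the function names are hypothetical.

```python
import urllib.request

DEFAULT_TIMEOUT_MS = 900_000  # documented default: 15 minutes


def build_request(url: str, body: bytes, timeout_ms: int = DEFAULT_TIMEOUT_MS) -> urllib.request.Request:
    """POST to an Ultra Task URL with a per-request X-SL-RequestTimeout header."""
    req = urllib.request.Request(url, data=body, method="POST")
    # Assumption: the pipeline accepts JSON; change the content type as needed.
    req.add_header("Content-Type", "application/json")
    req.add_header("X-SL-RequestTimeout", str(timeout_ms))
    return req


def send(req: urllib.request.Request, timeout_ms: int = DEFAULT_TIMEOUT_MS):
    """Send the request and return (status, body)."""
    # Give the client socket a little more time than the FeedMaster timeout so
    # the client does not give up on a request the FeedMaster is still waiting on.
    with urllib.request.urlopen(req, timeout=timeout_ms / 1000 + 30) as resp:
        return resp.status, resp.read()
```

Keeping the client-side socket timeout slightly longer than the header value is a design choice: it lets the FeedMaster, rather than the client, decide when a request has expired.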

Ultra requests are guaranteed to run at least once before the timeout expires.